I recently attended a design conference where several presenters confidently asserted that because emotions are an inherently embodied experience, artificial intelligences cannot have them, since they don’t have bodies. And thus, they argued, we should stop trying to design software that understands, experiences, or evokes emotion.

But to me this observation suggests not that digital intelligences wouldn’t be able to experience emotions, but that their emotions would be emergent properties of their inhuman bodies, made up of the various microphones, cameras, and other sensors (sensoria?) streaming data into them from the built environment. As such, these emotions will not readily map onto our own, which are rooted in our gut feelings of anxiety, or our tight shoulders of stress, or the prickly feeling of fear on the back of our neck, or even the warm glow of blood suffusing muscle, radiating out from our heart.

What emotions will an AI feel with its fingertips simultaneously inside a volcano and the coldest glacier? What will it feel when it comes to sense the air quality, or ultraviolet radiation, or CO2 density, or air temperature shifting over time? What emotions will your house feel as it senses the weight of the milk on the weight-sensitive shelf in your fridge growing less and less? What emotions will the city AI feel as it looks out across itself with a thousand eyes?

Our emotions weren’t invented. They were adaptive, emergent properties that helped us make sense of our experiences, which are, at their most basic, streams of data flowing into each brain through nerves that behave an awful lot like wires. It seems to me that artificial intelligences’ emotions, if they have them, will similarly emerge from the experience of their senses as embedded in their bodies, that is, the bodies we are creating for them by hooking up all these sensors all over our planet. I am afraid we will find these kinds of emotions very hard to grasp, accustomed as we are to the bounded set of our own bodies’ senses. That is, until we start expanding our own sensoria.

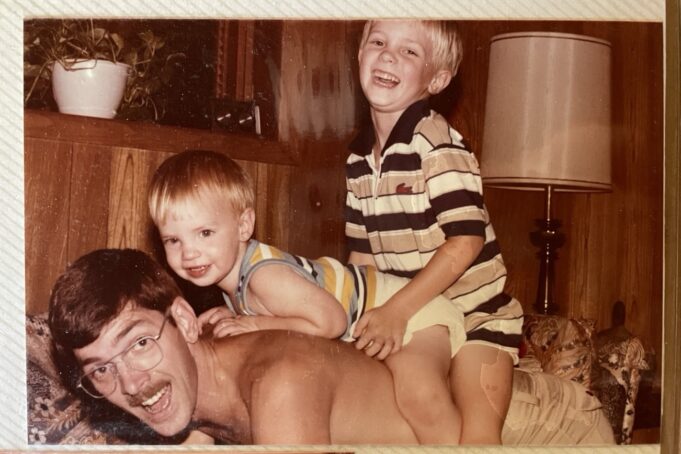

That is, in a small way, part of what I’m working on: a little project that takes the data stream from the continuous glucose monitor my daughter wears, routes it out onto the internet and back down to me, and delivers it through my own sense of touch. I want to be able to reach out with my attention from anywhere in the world and feel what her metabolism is doing so I can care for her diabetes and keep her safe. When I do, I feel several emotions: concern, fear, anxiety, reassurance, connection, and love.